The title of a now-deleted Reddit post read simply: “V2 of a Paul Chadeisson model I’ve been training”. Just below sat three digital renderings of mountain-sized sci-fi cityscapes. Zooming in revealed the telltale glitches of an AI prompt-based image program, but the images were nonetheless impressive. Framed in a conventional perspective, the gargantuan structures evoked the same awe and excitement as the best sci-fi visions.

The renderings were stylistic replications of the work of Paul Chadeisson, a freelance concept artist who has worked on major film, video game, and streaming productions like Black Adam, Cyberpunk 2077, Love, Death & Robots, and the upcoming Dune: Part Two. The user who created the now-deleted images had done so by training an AI model explicitly on Chadeisson’s work. But posting them on the platform kicked off a heated debate between Reddit community members who thought the model was an ethical step too far and those who considered it a victimless creative endeavor. Chadeisson himself got wind of the model and chimed in on the post as well.

“As the owner of the images you are using to develop this model, I think I have my [word] to say,” wrote Chadeisson in reply to the original post. “This model seems like a really fun tool [for my] personal use, but in the hand of others, it seems completely unhealthy, especially without my consent and authorization. Those images are under copyright and you are not allowed to use [them] in any way.”

This Reddit discussion is a microcosm of a growing debate on AI art, and there’s no lack of strident opinions in either camp. AI image generation tools are only getting better at what they do, which means concerns about ethical use, copyright law and infringement, and what it means to be an artist and a human are only going to get more pressing as time passes. Much depends on how we navigate the resulting implications.

Ghost in the machine

That AI can shake up entire industries and lead people to question humanity’s role wherever it is deployed is nothing new. But never before has the technology so directly touched what we often deem the exclusive and sacred territory of what some call the soul: art and creative expression. Many people share the fear once expressed by Douglas Hofstadter, the Pulitzer Prize-winning author of Gödel, Escher, Bach: An Eternal Golden Braid, that if artistic minds capable of producing unrivaled works of subtlety, complexity, and depth could be trivialized by a small chip, it would lay waste to their sense of what it is to be human.

And yet here we are, confronted with the very essence of that existential worry. AI art generators like DALL-E, MidJourney, and Stable Diffusion have opened something of a Pandora’s Box. But one of the main reasons this kind of art and its tools have provoked such a fervent reaction among artists and art lovers arguably stems from a misconception of how they work.

So, how do AI-generated art programs work?

On a technical level, one well-known approach to AI image generation uses Generative Adversarial Networks (GANs). A GAN actually involves two neural networks: a generator that creates images and a discriminator that judges how close those images are to the real thing, based on reference images such as ones taken from the internet. The discriminator’s score is sent back to the generator, which “learns” from this feedback and produces a more convincing image for the next scoring round. Today’s best-known prompt-based tools, including DALL-E 2 and Stable Diffusion, rely instead on a newer technique called diffusion, in which a model learns to turn random noise into an image step by step. In either case, it was by combining neural networks designed to produce images with language models that interpret text input that prompt-based image generation was born.
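To make the generator-versus-discriminator feedback loop more concrete, here is a deliberately tiny sketch of adversarial training. It assumes that single numbers stand in for images: the “real” reference data are samples from a bell curve centered at 4, the generator can only learn one shift value `b`, and the discriminator is a simple logistic-regression scorer. The function and parameter names (`train_toy_gan`, `real_mean`, and so on) are hypothetical, and this illustrates the general GAN idea only; it is not how any of the tools mentioned here are actually implemented.

```python
import math
import random


def sigmoid(s: float) -> float:
    """Numerically stable logistic function (maps any score to 0..1)."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def train_toy_gan(real_mean=4.0, steps=5000, batch=64, lr=0.05, seed=0):
    """Toy 1-D GAN (illustrative assumption, not a production model).

    Real "reference" data: samples from N(real_mean, 1).
    Generator: x = z + b with z ~ N(0, 1); it only learns the shift b.
    Discriminator: D(x) = sigmoid(w * x + c), its estimate that x is real.
    """
    rng = random.Random(seed)
    b = 0.0          # generator parameter
    w, c = 0.0, 0.0  # discriminator parameters

    for _ in range(steps):
        # Discriminator step: learn to score real samples high, fakes low.
        real = [rng.gauss(real_mean, 1.0) for _ in range(batch)]
        fake = [rng.gauss(0.0, 1.0) + b for _ in range(batch)]
        gw = gc = 0.0
        for xr, xf in zip(real, fake):
            dr = sigmoid(w * xr + c)
            df = sigmoid(w * xf + c)
            # Gradient of -log D(xr) - log(1 - D(xf)) w.r.t. w and c.
            gw += (dr - 1.0) * xr + df * xf
            gc += (dr - 1.0) + df
        w -= lr * gw / batch
        c -= lr * gc / batch

        # Generator step: use the discriminator's score as feedback and
        # shift b so that generated samples look "more real" to it.
        gb = 0.0
        for _ in range(batch):
            xf = rng.gauss(0.0, 1.0) + b
            # Gradient of the non-saturating loss -log D(xf) w.r.t. b.
            gb += (sigmoid(w * xf + c) - 1.0) * w
        b -= lr * gb / batch

    return b, w, c


if __name__ == "__main__":
    b, w, c = train_toy_gan()
    print(f"generator shift b = {b:.2f}, discriminator weight w = {w:.3f}")
```

If the loop behaves as intended, the generator’s shift `b` should drift toward the real data’s center, while the discriminator’s weight `w` shrinks toward zero once it can no longer tell real from generated samples. Note that the generator never copies a real sample; it only adjusts its own parameter in response to the discriminator’s score.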

The three biggest players in the generative AI art game are MidJourney, DALL-E, and Stable Diffusion. Researchers at Google have created Imagen and Parti but have yet to release them to the public, partly due to concern over how they could be used. MidJourney comes from a startup of the same name run by David Holz, DALL-E has its origins in the Elon Musk-funded OpenAI, and the open-source Stable Diffusion comes from Stability AI, whose founder and CEO is Emad Mostaque. In terms of output, images from MidJourney tend to lean more illustrative and paint-like in their aesthetic, Stable Diffusion often touches on a kind of photorealistic surrealism, and DALL-E seems capable of keeping one foot in each of those realms.

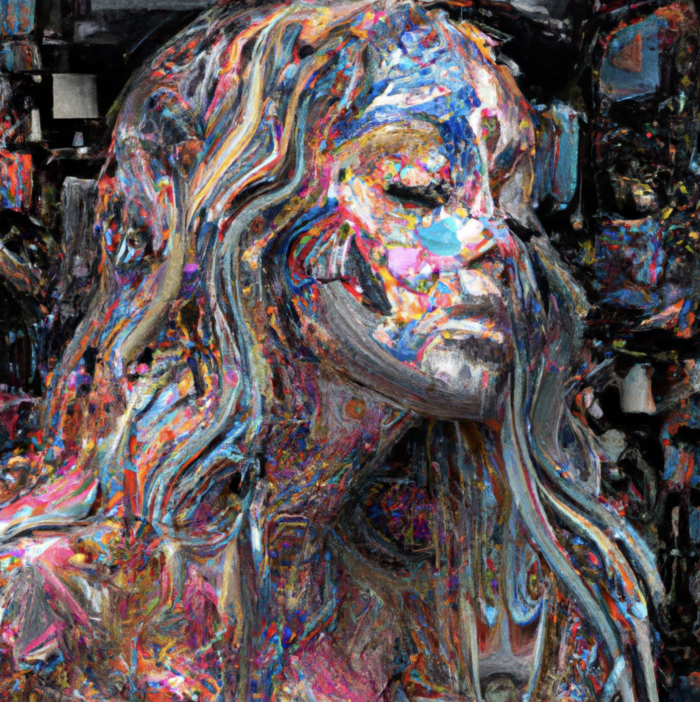

The term “prompt-based” art is one worth unpacking. AI art is a collaborative process, with human and machine inputs woven together to create the desired product. On MidJourney, for example, a user can type in a string of words and receive four visual outputs in a grid approximating the original idea. From there, users can keep iterating on those outputs, nudging them in a particular conceptual direction, or upscale, refine, and alter them in a co-creative tennis match that can last for hours. And since language’s power to express ideas and concepts is potentially limitless, the number of possible outputs from these programs also approaches infinity, even before a user decides to iterate on the original output.

Perhaps due to the technical complexity and opaque nature of these programs, misconceptions about how they work abound. One of the more common complaints AI-art advocates find themselves pushing back on is the critique that these programs “smash” existing artwork together to form something new.

“I think the biggest problem when it comes to the narrative surrounding AI art is the idea that it steals artwork from people, which is not accurate,” offered the artist Black Label Art Cult (BLAC), an AI art advocate and member of the pro-AI art group AI Infused Art while speaking to nft now. “People sometimes think these programs take existing art, put it into a bucket, stitch bits of it together, and then people sell the result as an NFT. It misrepresents what actually goes on in these programs.”

BLAC hosts a weekly Twitter Space called The New Renaissance in which artists and community members discuss some of the most controversial issues in the AI art world to help dispel common and harmful myths about how the technology works, among other things.

To build a successful AI model, you need to train it on huge amounts of data so the algorithm can learn how to perform a desired function. While most of the companies behind these prompt-based programs have revealed little about the technical details of how they built their AI models, we know the models themselves contain vast numbers of parameters and are trained on hundreds of millions or even billions of images. Stable Diffusion, for example, is trained on a core set of over 2.3 billion pairs of images and text tags that were scraped from the internet.

The key term here is “trained.” There is no massive image database from which these programs pull bits of images to create new art. They have learned to associate text with certain visual components.

“If you ask it for a human, [the program] knows that humans have two hands with five fingers on each hand,” explained Claire Silver, a collaborative AI artist and leading figure in the AI art movement, while speaking to nft now. “It knows that fingers are long and cylindrical and have a bone. It knows that bones [look and] move like this. So, it ‘imagines’ everything that you asked for, based on what it has learned to create something new. And I think that’s important for people to realize, because it’s an entirely different conversation if it pulled from existing work, and it doesn’t.”

Silver is a vocal advocate of AI art tools as part of a new creative revolution that opens the doors of artistic expression both to existing artists and to those who aren’t particularly gifted at creating visual artwork. She also hosts popular AI art competitions on Twitter, the latest of which wrapped up at the end of October. Thousands of people submitted artwork in the 18 days leading up to the competition’s conclusion, and the finalists’ works were displayed at the imnotArt gallery in Chicago.